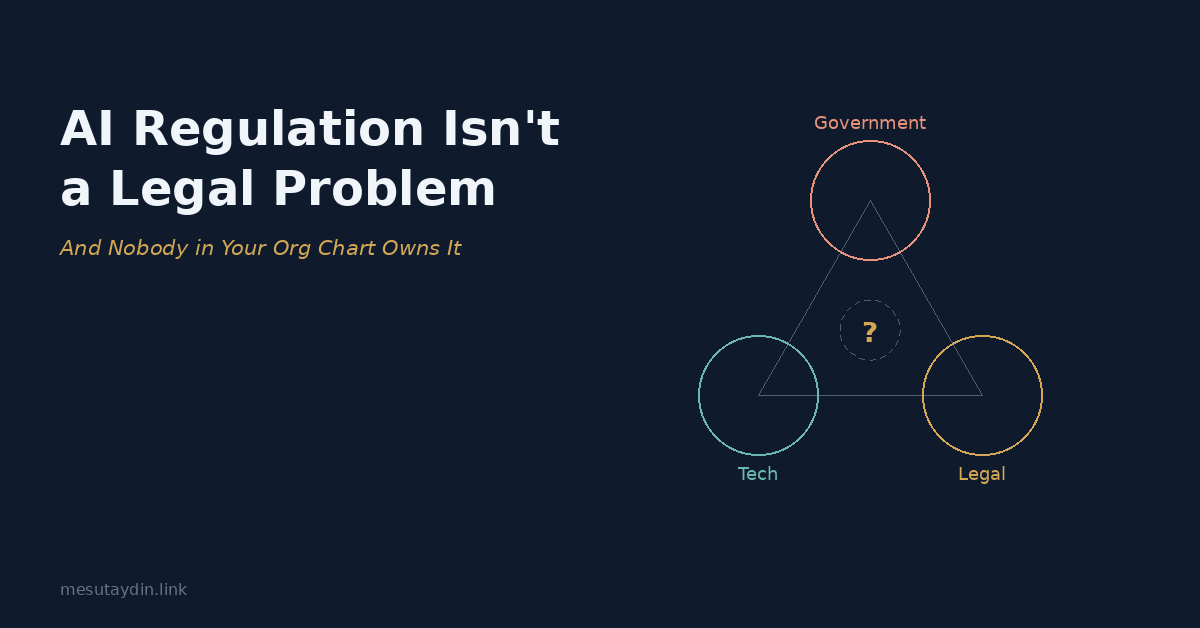

AI Regulation Isn't a Legal Problem — And Nobody in Your Org Chart Owns It

By Mesut Aydın

---

A European fintech is preparing to launch an AI-powered credit scoring product in Turkey. The product works. The market is ready. Then three things happen at once.

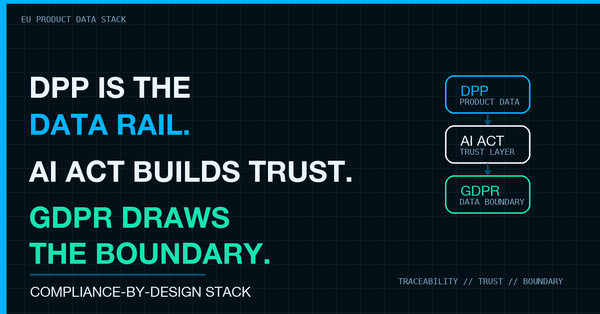

Turkey's banking regulator, BDDK, requires that all customer financial data stays within Turkish borders. The AI model, however, was trained on datasets hosted on AWS servers in Frankfurt, and those datasets fall under the EU's General Data Protection Regulation. And the EU AI Act, whose obligations began phasing in during 2025, classifies credit scoring AI as "high risk." That classification triggers a separate set of obligations around transparency, human oversight, and documentation, which neither the Turkish nor the German legal team had fully mapped.

The company's general counsel calls an emergency meeting. Legal understands Turkish banking regulation but has never read the AI Act's Annex III. The CTO understands the model architecture but doesn't know whether "data localization" means the training data, the model weights, or the inference outputs — because the answer depends on which regulator you ask. The government affairs team has relationships in Ankara, but their mandate has never included algorithmic compliance.

Everyone looks around the table.

Nobody owns this.

---

The Problem Behind the Problem

This isn't a story about one company. Variations of this scene play out quarterly — sometimes weekly — in organizations operating across regulatory jurisdictions. And the pattern is always the same: not a knowledge gap, but an architectural gap.

Every function at the table holds a piece of the puzzle. Legal knows the law. Engineering knows the system. Government affairs knows the stakeholders. But the puzzle itself — the question of how a single AI product simultaneously triggers obligations under three different regulatory frameworks, in three different countries, enforced by three different authorities with three different philosophical approaches to technology governance — that puzzle doesn't belong to anyone.

It sits in the white space between departments. And white space doesn't appear on org charts.

When Regulations Stop Being Parallel

For most of the last two decades, regulatory compliance has been a manageable exercise in parallel processing. You had data protection over here, financial regulation over there, trade compliance in another lane. Different teams, different frameworks, different rhythms. They occasionally overlapped, but rarely collided.

AI changed that.

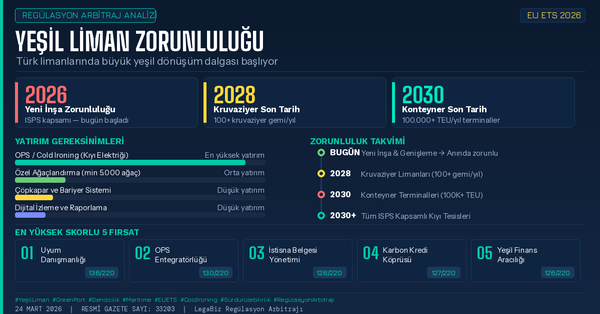

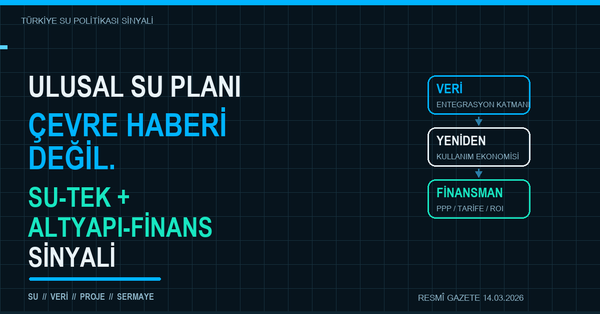

When the OECD updated its Due Diligence Guidance for Responsible Business Conduct to explicitly address AI systems in early 2026, it introduced a requirement that would have been unthinkable five years ago: traceability of training data provenance across the entire value chain. Not just "where is the data stored" but "where did it come from, who curated it, what biases were embedded in the selection, and can you document the chain of custody?"

Ask your machine learning team whether they can answer that question for every dataset used in fine-tuning. Most cannot — not because they're negligent, but because the question was never part of their workflow. It was nobody's job to ask.

Now layer on the EU AI Act's risk classification system. If your AI product falls under "high risk" (and the list includes credit scoring, hiring tools, life and health insurance pricing, critical infrastructure management, and biometric identification), you need conformity assessments, technical documentation, human oversight mechanisms, and post-market monitoring. Each of these touches a different internal function. Each function reports to a different C-suite executive.

Now layer on the fact that Turkey, your largest emerging market, has no comprehensive AI law yet — but has a robust data protection framework (KVKK) that doesn't neatly align with GDPR, a telecommunications authority (BTK) with growing interest in algorithmic oversight, and a competition authority (Rekabet Kurumu) that has started investigating algorithmic collusion.

Same product. Same quarter. Three jurisdictions. Three regulatory philosophies. Zero unified ownership.

The Questions Nobody Is Asking

Here is what makes this genuinely difficult, and what separates it from a compliance-checklist problem.

When PSD3 (the EU's upcoming third Payment Services Directive) makes room for AI-driven payment initiation, but the AI Act classifies key categories of financial decision-making AI, such as creditworthiness assessment, as high-risk, who in your organization reconciles two EU frameworks that don't talk to each other? This isn't a legal question. It's a design question, a product question, and a government engagement question, all at once.

When your open banking APIs process data that is simultaneously subject to Turkey's BDDK localization requirements, the EU's data adequacy framework, and the OECD's new due diligence standards, who determines whether "data localization" means keeping the raw data in-country, keeping the model in-country, or keeping the inference pipeline in-country? Because the answer changes depending on which authority you're speaking to, and getting it wrong doesn't just mean a fine — it means market access.

When the UAE launches its permissive AI regulatory sandbox while the EU tightens its conformity requirements and Turkey adopts a wait-and-see approach, who develops your company's AI regulatory strategy across all three? This isn't three separate compliance projects. It's one strategic architecture that needs to account for fundamentally different approaches to the same technology.

When an OECD member state updates its national AI strategy and your competitors start pre-positioning their compliance investments to gain first-mover advantage in that market, who spots the arbitrage window? By the time it becomes a legal memo, the window is often already closing.

These questions don't land neatly in any existing inbox.

The Architecture of the Gap

The instinct in most organizations is to solve this by expanding the legal team. Hire an AI lawyer. Add a tech-savvy associate. But this misdiagnoses the problem.

A lawyer can tell you what the AI Act requires. A lawyer cannot sit across from a Turkish regulator and explain why your model architecture actually satisfies the spirit of data localization requirements even though the training data sits in Frankfurt. That requires understanding the model. It requires understanding the regulator's institutional incentives. It requires anticipating the regulator's likely next move, based on the political economy of Turkish tech policy. And it requires the ability to translate between three languages that rarely overlap: the language of engineering, the language of law, and the language of government.

This is not about adding headcount to an existing function. It is about recognizing that a new function has emerged — one that operates at the intersection of legal, technical, and governmental domains, and whose primary skill is not expertise in any single domain but the ability to translate between all three under conditions of regulatory uncertainty.

Some organizations are beginning to call this "AI governance." Others fold it under "regulatory strategy" or "technology policy." A few have created hybrid roles that report to the general counsel but sit in product meetings and fly to Brussels. The nomenclature hasn't settled. But the need is no longer theoretical.

What This Isn't

This is not an argument for a new C-suite title. Organizations don't need more titles; they need the right capability in the room when three regulatory frameworks collide over a single product decision.

This is also not an argument that lawyers, engineers, or government affairs professionals are insufficient. They are essential. But the assumption that one of these existing functions can simply "stretch" to cover the intersection — that a good lawyer can learn enough AI to manage algorithmic compliance, or that a smart CTO can navigate OECD policy — underestimates the depth of each domain and the speed at which all three are moving simultaneously.

The question is structural: does your organization have a function — however you name it — that is designed to operate in the seams between legal, technology, and government?

If the answer is no, you're not unusual. Most organizations are in the same position.

But the regulatory calendar doesn't wait for org chart redesigns. The EU AI Act's first compliance deadlines have already passed. The OECD's updated due diligence guidance is being taken up by member states. Turkey's AI strategy is moving from white paper to legislative drafting. And every quarter, the number of jurisdictions with active AI governance frameworks increases.

The seat at that table is empty in most organizations.

The question is how long it stays that way.

---

Mesut Aydın writes about AI governance, regulatory strategy, and the intersection of technology policy and international affairs at mesutaydin.link.