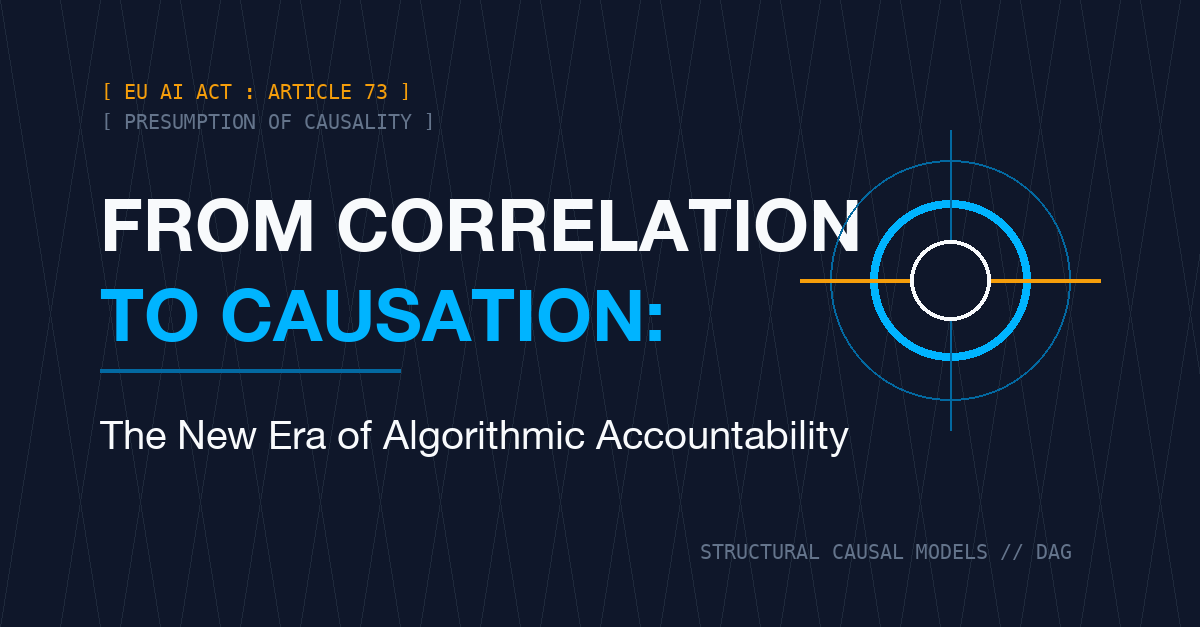

Causal AI and Algorithmic Accountability: From the Tyranny of Correlation to the Law of Causality

"Modern AI systems are genius at telling us 'what' an event is; however, accountability is only as strong as the system's ability to answer 'what if?' (counterfactuals)."

1. Introduction: The Epistemological Fracture of Predictive Power

The artificial intelligence world has spent the last decade building a colossal correlation machine. Deep learning and Large Language Models (LLMs) have captured patterns across billions of data points, whispering the "next word" or the "next consumer behavior" to us with unbelievable accuracy. Yet, these systems have remained silent in explaining the reason behind their outputs.

This silence has turned into a massive accountability crisis at the exact moment algorithms entered courtrooms, healthcare diagnostics, and credit scoring systems. To the question, "Why did you make this decision?", the answer, "Because the weights in a multidimensional space summed up this way," no longer satisfies legal professionals or ethics boards.

---

2. The Scientific Construction of Causality: Judea Pearl and do-calculus

The technical foundation of accountability begins with the "Ladder of Causation" developed by Turing Award winner Judea Pearl. Most of today's AI systems are trapped on the first rung: Association, the level of pure observation. The question "If I see X, should I expect Y?" rests entirely on correlation.

Real accountability, however, lies on the higher rungs:

- Intervention: "If I force the system to X (do(X)), how does the output change?"

- Counterfactuals: "If I had chosen Z instead of X, would the result still be Y?"

To answer these questions, Causal AI employs Structural Causal Models (SCMs) and do-calculus. An SCM does not treat the system as a black box; it encodes the causal arrows between variables as a Directed Acyclic Graph (DAG), together with an equation describing how each variable is generated from its direct causes. Making this structure explicit allows the "accomplices" in an algorithm's decision-making process, the confounders that influence both an input and the outcome, to be isolated mathematically.
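The gap between the first two rungs can be made concrete with a small simulation. The sketch below is an illustrative toy, not taken from Pearl's work or from any library: the three-variable graph (Z → X, Z → Y, X → Y), the coefficients, and the function names `sample`, `observational_slope`, and `interventional_effect` are all assumptions chosen for this example. It uses only the Python standard library.

```python
import random
import statistics


def sample(rng, do_x=None):
    """Draw one unit from a toy SCM with arrows Z -> X, Z -> Y, X -> Y."""
    z = rng.gauss(0, 1)  # confounder: influences both X and Y
    # Observational regime: X listens to Z. Under do(X = x) the Z -> X arrow is cut.
    x = z + rng.gauss(0, 1) if do_x is None else do_x
    # Assumed structural equation: the true causal effect of X on Y is 1.0.
    y = 1.0 * x + 2.0 * z + rng.gauss(0, 1)
    return x, y


def observational_slope(rng, n=50_000):
    """Rung 1: slope of Y on X in passively observed data (Cov(X, Y) / Var(X))."""
    xs, ys = zip(*(sample(rng) for _ in range(n)))
    mx, my = statistics.fmean(xs), statistics.fmean(ys)
    cov = sum((a - mx) * (b - my) for a, b in zip(xs, ys)) / n
    var = sum((a - mx) ** 2 for a in xs) / n
    return cov / var


def interventional_effect(rng, n=50_000):
    """Rung 2: E[Y | do(X=1)] - E[Y | do(X=0)], estimated by simulation."""
    y1 = statistics.fmean(sample(rng, do_x=1.0)[1] for _ in range(n))
    y0 = statistics.fmean(sample(rng, do_x=0.0)[1] for _ in range(n))
    return y1 - y0


if __name__ == "__main__":
    rng = random.Random(0)
    print("observational slope:  ", round(observational_slope(rng), 2))
    print("interventional effect:", round(interventional_effect(rng), 2))
```

Under these assumptions the observational slope comes out near 2.0, while the interventional effect comes out near the true value of 1.0. The difference is the part contributed by the confounder Z, which a purely correlational model cannot separate from the effect of X.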

---

3. Legal Milestones: AI Act and Liability Directive

The law traditionally lags behind technology, but this time the European Union has moved to narrow the gap by placing the principle of "Causality" at the heart of its legislation.

AI Act Article 73: Notification of Causal Link

Article 73 of the Artificial Intelligence Act obliges providers of high-risk AI systems to report serious incidents, and it sets a critical threshold for when that obligation is triggered: “immediately after the provider has established a causal link between the AI system and the serious incident...” In practice, this means providers need more than an incident log; they need an auditing infrastructure capable of establishing whether the AI system was causally linked to the incident.

AI Liability Directive: The Shift in the Burden of Proof

Perhaps the most revolutionary step was proposed in the AI Liability Directive. Because it is nearly impossible for a victim to prove how a complex algorithm harmed them, the European Commission's 2022 proposal introduces a rebuttable "Presumption of Causality." If a defendant fails to comply with a relevant duty of care (e.g., AI Act rules on data governance or transparency) and damage occurs, the court would, under the conditions set out in the proposal, presume a causal link between that failure and the AI system's harmful output until proven otherwise.

This makes "Causal AI" not a luxury, but a defensive shield (defensive tech).

---

4. Audit Architecture: The Transition from SHAP to SCM

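Traditional "Explainable AI" (XAI) tools such as SHAP and LIME offer colorful maps showing how much each feature contributed to a model's output. But such an attribution is not a causal explanation: it scores what the model relied on, not whether changing that feature in the real world would have changed the outcome.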

For algorithmic accountability, a causal architecture provides:

- Robustness Analysis: It tests whether the system's reactions to minor data deviations reflect genuine causal relationships rather than spurious correlations.

- Bias Detection: If the reason for a loan rejection appears to be a "ZIP code", the causal graph makes it possible to test whether this code is actually acting as a proxy for "race" or "income group".

- NHAM and Social Media Auditing: Next-generation models such as the Network Hawkes Auditing Model (NHAM) are used to measure the causal contribution of platforms' algorithmic interventions (engagement maximization) to social polarization and disinformation.
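The ZIP-code question above can be posed as a counterfactual test: hold everything about an applicant fixed, flip only the protected attribute, and see whether the decision changes. The following is a minimal sketch under stated assumptions. The structural equations, the `proxy_strength` parameter, and the functions `zip_risk`, `approve`, and `counterfactual_flip_rate` are hypothetical and invented for illustration; a real audit would have to estimate the causal graph and the noise terms from data rather than simulate them.

```python
import random


def zip_risk(group, u_zip, proxy_strength):
    """Assumed structural equation: group membership shifts the ZIP-level risk score."""
    return proxy_strength * group + u_zip


def approve(zip_score, income):
    """Hypothetical credit model: it never sees `group`, only ZIP risk and income."""
    return income - 1.5 * zip_score > 0.0


def counterfactual_flip_rate(proxy_strength, n=10_000, seed=0):
    """Share of applicants whose decision would change had their group been different."""
    rng = random.Random(seed)
    flips = 0
    for _ in range(n):
        group = rng.randint(0, 1)      # protected attribute (0 or 1)
        u_zip = rng.gauss(0.0, 0.3)    # exogenous noise on the ZIP score
        income = rng.gauss(0.5, 1.0)   # assumed independent of group in this toy
        factual = approve(zip_risk(group, u_zip, proxy_strength), income)
        # Counterfactual: keep the individual's noise terms (u_zip, income) fixed,
        # flip the group, and recompute everything downstream of it.
        counterfactual = approve(zip_risk(1 - group, u_zip, proxy_strength), income)
        flips += factual != counterfactual
    return flips / n


if __name__ == "__main__":
    print("ZIP independent of group:", counterfactual_flip_rate(0.0))
    print("ZIP acts as a proxy:     ", counterfactual_flip_rate(0.8))
```

With `proxy_strength=0.0` no decision changes, because ZIP carries no information about the group. With a positive value, a substantial share of decisions flip even though the model never reads `group` directly, which is the signature of a proxy variable.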

---

5. The Socio-Technical Paradox: The Limits of Pragmatism

Researchers like Poechhacker and Kacianka (2021) remind us that causality is not just a mathematical formula, but a socio-technical perspective. According to this view, inspired by pragmatist philosophy, the validity of a causal model depends not only on the accuracy of the arrows in the graph, but on how well it aligns with the norms of the social system (legal rules, professional ethics, local values) in which it is applied.

Therefore, an accountable AI design is not just an engineering project; it is the establishment of a joint "Inquiry Structure" spanning the disciplines of Law, Sociology, and Data Science.

---

6. Strategic Conclusion and Roadmap

Causal AI is the only bridge that opens the "intent" and "impact" of algorithms to interrogation. For decision-makers, the roadmap is clear:

- Inventory: Which of your decision processes have you delegated to AI?

- Causal Mapping: Have you defined the "Why" arrows (DAG) behind these decisions?

- AI Act Compliance: Under Article 73, do you have the simulation capacity to present a "causal link" report at the moment of an incident?

- Accountability Index: Are you periodically measuring your own Algorithmic Accountability Index (AAI)?
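For the Causal Mapping step, one lightweight starting point is to write the "why" arrows down as data and check that they actually form a DAG. The sketch below assumes Python 3.9+ (for the standard-library `graphlib` module); the variable names in `CREDIT_DAG` and the helper functions `causal_order` and `ancestors` are hypothetical examples, not a prescribed schema.

```python
from graphlib import CycleError, TopologicalSorter

# Hypothetical causal map for a credit decision: node -> set of its direct causes.
CREDIT_DAG = {
    "income": set(),
    "zip_code": {"income"},
    "credit_history": {"income"},
    "decision": {"zip_code", "credit_history", "income"},
}


def causal_order(parents):
    """Return variables in cause-before-effect order; raise ValueError on a cycle."""
    try:
        return list(TopologicalSorter(parents).static_order())
    except CycleError as err:
        # graphlib reports the offending cycle as the second element of err.args
        raise ValueError(f"not a DAG, cycle found: {err.args[1]}") from err


def ancestors(parents, node):
    """Collect every direct or indirect cause of `node` in the map."""
    seen = set()
    stack = list(parents.get(node, ()))
    while stack:
        cause = stack.pop()
        if cause not in seen:
            seen.add(cause)
            stack.extend(parents.get(cause, ()))
    return seen


if __name__ == "__main__":
    print(causal_order(CREDIT_DAG))
    print(sorted(ancestors(CREDIT_DAG, "decision")))
```

Listing the ancestors of a decision node gives a first inventory of the variables an incident report would need to account for; it says nothing yet about the strength of each arrow, which has to be estimated separately.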

Correlation tells us about the past, but only Causality gives us the power to manage the future and stand behind our decisions.

---

Bibliography and Academic Foundations

- Pearl, J. (2009). Causality: Models, Reasoning, and Inference. Cambridge University Press.

- Poechhacker, N., & Kacianka, S. (2021). Algorithmic Accountability in Context: Socio-Technical Perspectives on Structural Causal Models. Frontiers in Big Data.

- European Commission. (2022). Proposal for a Directive on adapting non-contractual civil liability rules to artificial intelligence (AI Liability Directive).

- Dongre, D., et al. (2025). Network Hawkes Auditing Model: A Causal Framework for Detecting Algorithmic Influence in Social Media.

- Touitou, M. (2025). Algorithmic credit allocation and the rise of financial inequality: Evidence from U.S. fintech platforms. Acta Oeconomica.

- Kacianka, S., & Pretschner, A. (2018). Understanding Explainability through Structural Causal Models. arXiv.