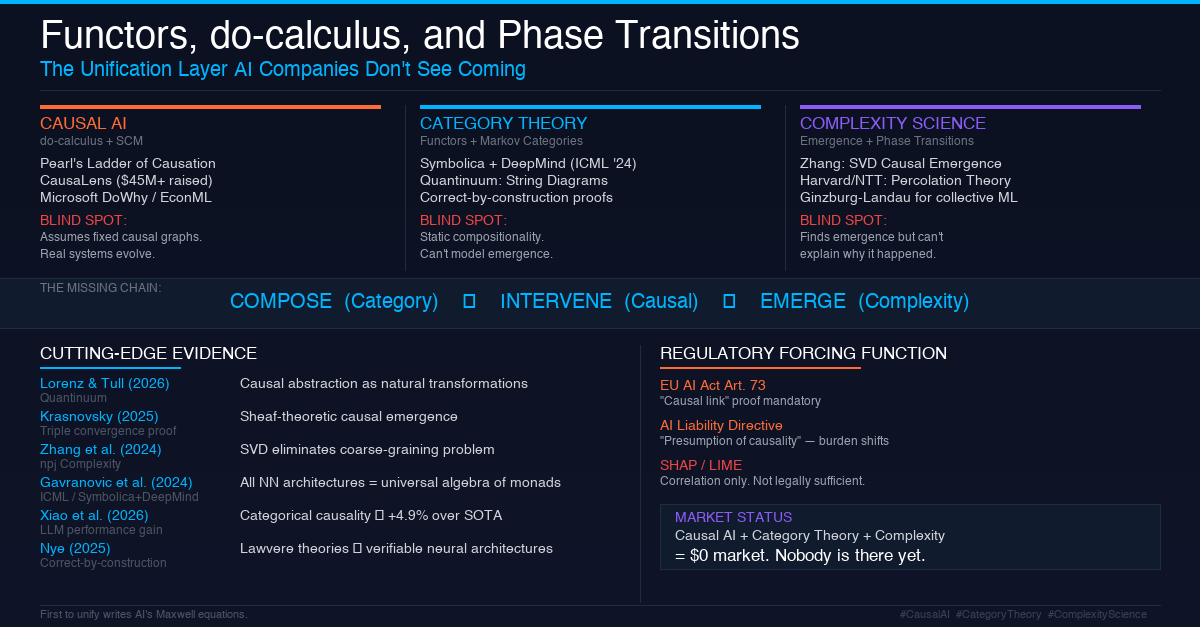

Functors, do-calculus, and Phase Transitions: The Unification Layer AI Companies Don't See Coming

The Three Revolutions Nobody Connected

The AI industry is living through three simultaneous revolutions -- in separate silos, blissfully unaware of each other.

Revolution 1: Causal AI. Judea Pearl gave us do-calculus and structural causal models. Companies like CausaLens raised $45M+ to bring causality to enterprise. Microsoft shipped DoWhy and EconML. The promise: AI that answers "why," not just "what."

Revolution 2: Categorical AI. Symbolica AI and Google DeepMind co-published the landmark ICML 2024 paper arguing that category theory is "an algebraic theory of all architectures." Bob Coecke's group at Quantinuum formalized causal models as string diagrams. The promise: AI with compositional guarantees -- if the parts are correct, the whole is provably correct.

Revolution 3: Complexity-Aware AI. Jiang Zhang's group at Beijing Normal University built the Neural Information Squeezer and SVD-based Causal Emergence theory. Harvard/NTT showed transformers exhibit phase transitions following percolation theory. The promise: AI that understands emergence -- when the whole becomes more than the sum of its parts.

Here's the problem: no company, no lab, no framework has unified all three.

CausaLens does causality without composition. Symbolica does composition without emergence. Beijing Normal does emergence without structural causality. They're all building one leg of a three-legged stool.

This article argues that the convergence of these three fields -- what I call Compositional Causal Emergence (CCE) -- is not an academic curiosity. It's AI's next paradigm shift, and the window for first-mover advantage is open right now.

---

The Blind Spots: What Each Silo Can't See

Causal AI's Frozen Map Problem

Every causal AI system today assumes a fixed causal graph. You draw a DAG, label the nodes, define the arrows, then run do-calculus on it. This works beautifully in controlled environments.
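To make the fixed-graph workflow concrete, here is a minimal sketch in plain Python. The three-variable DAG (Z -> X, Z -> Y, X -> Y) and every probability in it are hypothetical, chosen only for illustration. It contrasts what you observe, P(Y=1 | X=1), with what an intervention would produce, P(Y=1 | do(X=1)), using backdoor adjustment over the confounder Z.

```python
from itertools import product

# Hypothetical binary SCM on a fixed DAG: Z -> X, Z -> Y, X -> Y.
# All numbers below are illustrative assumptions, not data.
P_Z = {0: 0.5, 1: 0.5}                      # P(Z=z)
P_X1_GIVEN_Z = {0: 0.2, 1: 0.8}             # P(X=1 | Z=z)
P_Y1_GIVEN_XZ = {(0, 0): 0.1, (1, 0): 0.3,  # P(Y=1 | X=x, Z=z), keyed by (x, z)
                 (0, 1): 0.5, (1, 1): 0.7}


def joint(z, x, y):
    """Joint probability P(Z=z, X=x, Y=y) implied by the DAG factorization."""
    px = P_X1_GIVEN_Z[z] if x == 1 else 1 - P_X1_GIVEN_Z[z]
    py = P_Y1_GIVEN_XZ[(x, z)] if y == 1 else 1 - P_Y1_GIVEN_XZ[(x, z)]
    return P_Z[z] * px * py


def p_y1_given_x(x):
    """Observational quantity P(Y=1 | X=x): conditioning, confounded by Z."""
    numerator = sum(joint(z, x, 1) for z in (0, 1))
    denominator = sum(joint(z, x, y) for z, y in product((0, 1), repeat=2))
    return numerator / denominator


def p_y1_do_x(x):
    """Interventional quantity P(Y=1 | do(X=x)) via backdoor adjustment on Z."""
    return sum(P_Z[z] * P_Y1_GIVEN_XZ[(x, z)] for z in (0, 1))


observed = p_y1_given_x(1)
intervened = p_y1_do_x(1)
print(f"P(Y=1 | X=1)     = {observed:.2f}")
print(f"P(Y=1 | do(X=1)) = {intervened:.2f}")
```

With these made-up numbers the observational value is about 0.62 while the interventional value is 0.50: the gap is the confounding that do-calculus removes. Note that the adjustment formula is only valid because the graph is assumed known and fixed.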

But real-world systems don't have fixed causal structures. In financial markets, the causal relationships between variables shift during crises. In healthcare, a patient's causal response to treatment changes as comorbidities emerge. In climate systems, feedback loops create entirely new causal pathways that didn't exist a decade ago.

Current Causal AI is a GPS navigation system that draws the map perfectly but can't handle the fact that the roads keep changing.

Category Theory's Earthquake Problem

Categorical approaches deliver stunning compositional guarantees. If module A is verified and module B is verified, their composition A + B is provably correct. This is the "correct-by-construction" dream.
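A small, hedged illustration of what "correct-by-construction" can mean in practice: in a Markov-category setting, finite stochastic matrices act as morphisms and matrix multiplication acts as sequential composition. The sketch below (plain Python; the kernels `F` and `G` are hypothetical) checks one modest invariant, that composing two valid probability kernels always yields a valid probability kernel.

```python
def compose(f, g):
    """Sequential composition of kernels: apply f, then g (a matrix product)."""
    if any(len(row) != len(g) for row in f):
        raise ValueError("output space of f must match input space of g")
    return [
        [sum(f[i][k] * g[k][j] for k in range(len(g))) for j in range(len(g[0]))]
        for i in range(len(f))
    ]


def is_stochastic(kernel, tol=1e-9):
    """True if every row is a probability distribution (non-negative, sums to 1)."""
    return all(
        all(p >= -tol for p in row) and abs(sum(row) - 1.0) <= tol
        for row in kernel
    )


# Hypothetical verified modules: F models P(B | A), G models P(C | B).
F = [[0.9, 0.1],
     [0.2, 0.8]]
G = [[0.7, 0.3],
     [0.4, 0.6]]

FG = compose(F, G)  # models P(C | A)
print(is_stochastic(F), is_stochastic(G), is_stochastic(FG))
```

The invariant here is deliberately simple. Real categorical frameworks aim at much richer properties, but the shape of the argument is the same: the property holds for the parts, composition preserves it, so it holds for the whole.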

But complex systems violate this assumption constantly. The whole is more than the sum of its parts. Non-linear interactions, feedback loops, and emergent behaviors mean that perfectly verified components can produce unpredictable system-level behavior. A building made of flawless LEGO bricks still needs earthquake engineering.

Complexity Science's Forensics Problem

Causal emergence research can detect when macro-level dynamics are causally stronger than micro-level dynamics. Zhang's SVD framework (2024, npj Complexity) made this computationally tractable. So complexity science can find the earthquake, but it can't tell you which column broke, or what would have happened if you'd reinforced it.

Without structural causal models, emergence detection is observation without intervention. Without compositional guarantees, emergence analysis doesn't scale to modular systems.
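For readers who want to see what "macro is causally stronger than micro" means numerically, here is a minimal sketch of effective information (EI) in the style of Hoel's original definition, with a hand-picked coarse-graining. This is not the SVD-based method mentioned above; the four-state system and the grouping of states are assumptions made for illustration.

```python
from math import log2


def effective_information(tpm):
    """EI in bits for a transition matrix under a uniform intervention on states.

    Computed as the mean KL divergence between each row and the average row.
    """
    n = len(tpm)
    avg = [sum(row[j] for row in tpm) / n for j in range(len(tpm[0]))]

    def kl(row):
        # Terms with p == 0 contribute nothing; avg[j] > 0 whenever some row has p > 0.
        return sum(p * log2(p / q) for p, q in zip(row, avg) if p > 0)

    return sum(kl(row) for row in tpm) / n


# Micro scale (hypothetical): states 0-2 hop uniformly among themselves, state 3 is fixed.
micro = [
    [1 / 3, 1 / 3, 1 / 3, 0.0],
    [1 / 3, 1 / 3, 1 / 3, 0.0],
    [1 / 3, 1 / 3, 1 / 3, 0.0],
    [0.0, 0.0, 0.0, 1.0],
]

# Macro scale: group {0, 1, 2} into A and {3} into B. The dynamics become deterministic.
macro = [
    [1.0, 0.0],
    [0.0, 1.0],
]

ei_micro = effective_information(micro)
ei_macro = effective_information(macro)
print(f"EI micro = {ei_micro:.3f} bits, EI macro = {ei_macro:.3f} bits")
print(f"causal emergence = {ei_macro - ei_micro:.3f} bits")
```

The macro description carries 1 bit of effective information against roughly 0.811 bits at the micro scale, so the coarse-grained model is the more informative causal description. What the number cannot tell you is which mechanism to intervene on.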

---

The Convergence: Three Operations, One Chain

CCE combines three fundamental operations into a single executable chain:

COMPOSE (Category Theory) -- Combine causal sub-models with structural guarantees using Markov categories and monoidal composition.

INTERVENE (Causal AI) -- Calculate the effect of interventions in the composed model using SCMs and do-calculus, including counterfactual reasoning.

EMERGE (Complexity Science) -- Measure whether macro-level causal effects are stronger than micro-level effects using effective information and SVD decomposition.

The critical innovation: these three operations can be composed on top of each other:

EMERGE after INTERVENE after COMPOSE

Meaning: compose the parts, intervene in the whole, then measure whether the intervention changed the emergent structure itself.
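As a toy sketch of that chain, assuming finite Markov kernels as the sub-models: the function names `compose`, `intervene`, and `emerge` below are hypothetical, and `emerge` is reduced to single-scale effective information rather than a full micro-versus-macro comparison. The point is only to show the three steps running in sequence on the same object.

```python
from math import log2


def compose(kernels):
    """COMPOSE: chain kernels end to end into one input-to-output kernel."""
    result = kernels[0]
    for g in kernels[1:]:
        result = [
            [sum(row[k] * g[k][j] for k in range(len(g))) for j in range(len(g[0]))]
            for row in result
        ]
    return result


def intervene(kernels, index, value):
    """INTERVENE: replace one mechanism with do(output = value), ignoring its input."""
    width = len(kernels[index][0])
    forced_row = [1.0 if j == value else 0.0 for j in range(width)]
    forced = [list(forced_row) for _ in kernels[index]]
    return kernels[:index] + [forced] + kernels[index + 1:]


def emerge(kernel):
    """EMERGE (simplified): effective information of the end-to-end kernel, in bits."""
    n = len(kernel)
    avg = [sum(row[j] for row in kernel) / n for j in range(len(kernel[0]))]
    return sum(
        sum(p * log2(p / q) for p, q in zip(row, avg) if p > 0) for row in kernel
    ) / n


# Hypothetical sub-models: F is P(B | A), G is P(C | B).
F = [[0.9, 0.1], [0.2, 0.8]]
G = [[0.7, 0.3], [0.4, 0.6]]
model = [F, G]

ei_before = emerge(compose(model))
ei_after = emerge(compose(intervene(model, index=0, value=0)))  # do(B = 0)
print(f"EI before intervention: {ei_before:.4f} bits")
print(f"EI after do(B=0):       {ei_after:.4f} bits")
```

Forcing B to a constant severs the path from A to C, so the end-to-end effective information drops to zero. A real CCE engine would need far more than this, but the sketch shows the measurement being taken on the intervened, composed model rather than on any part in isolation.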

This chain has never been formalized in any existing framework.

---

The Evidence: This Convergence Is Already Beginning

The building blocks exist. They just haven't been assembled:

Lorenz & Tull (2026, Quantinuum) formalized causal abstraction -- moving between micro and macro causal descriptions -- as natural transformations in category theory. This directly bridges causality and composition. Missing piece: no complexity metrics, no emergence detection.

Krasnovsky (2025) published the closest existing work: sheaf-theoretic causal emergence combining categorical structures (sheaves), causal metrics (effective information), and complexity (emergence) for distributed systems analysis. Missing piece: applied to microservices and power grids, not yet to AI/ML models.

Zhang et al. (2024, npj Complexity) eliminated the coarse-graining selection problem in causal emergence using SVD of transition matrices. This makes emergence practically computable. Missing piece: no compositional framework, doesn't scale to modular systems.

Gavranovic et al. (2024, ICML, Symbolica + DeepMind) showed all neural network architectures can be expressed in the universal algebra of monads. Missing piece: no causality, no complexity -- purely structural.

Xiao et al. (2026) reported that categorical causal formalization improves LLM performance by 4.9% over state-of-the-art. This isn't theory anymore -- it's a measurable performance gain.

Nye (2025) established a bijection between Lawvere theories and neural architectures, producing networks that are mathematically incapable of violating specified logical constraints. Correct-by-construction AI is not a dream; it's demonstrated.

---

Why This Matters Now: The Regulatory Forcing Function

The EU has inadvertently created the most powerful market pull for this convergence.

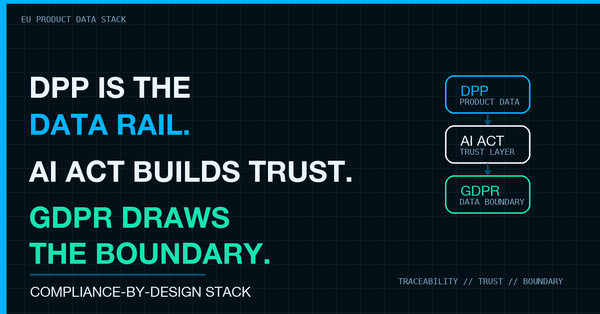

AI Act Article 73 requires providers of high-risk AI systems to report serious incidents once they have established a "causal link" between their system and the incident, or the reasonable likelihood of one. Not correlation. Not feature importance scores. A causal link.

The proposed AI Liability Directive would introduce a "rebuttable presumption of causality." If an AI system fails to comply with AI Act rules and damage occurs, courts would presume the AI caused the damage until the provider proves otherwise.

This makes Causal AI not a luxury but a defensive shield. And here's what no compliance vendor has realized: SHAP and LIME -- the tools everyone uses for "explainability" -- attribute a model's output to its input features. They describe the associations the model has learned, not the causal mechanisms behind them. They can show that a loan rejection is associated with a ZIP code, but they cannot establish whether that ZIP code is acting as a proxy for race or income. Only structural causal models can make that distinction.

The company that delivers compositional causal explanations -- explanations that are mathematically guaranteed to be consistent across system components, that can prove causal links, and that can detect emergent risks before they materialize -- doesn't just solve a compliance problem. It sets the de facto technical standard for AI Act compliance.

---

The White Space: What Nobody Has Built

The market map tells a clear story:

| Capability | Who's Doing It | What's Missing |
|---|---|---|
| Causal AI | CausaLens, Microsoft DoWhy | No composition, no emergence |
| Categorical AI | Symbolica, Quantinuum | No causality metrics, no emergence |
| Complexity/Emergence | Beijing Normal, Santa Fe Institute | No structural causality, no composition |
| All Three Combined | Nobody | The entire framework |

No product, no tool, no startup occupies the center of this triangle. The entity that builds a "Compositional Causal Emergence Engine" -- a system that composes verified causal modules, runs interventions across scales, and monitors emergent behavior in real time -- occupies an entirely new category.

---

Five Multiplier Effects

1. Compliance Arbitrage. First-mover in compositional causal explanations becomes the technical standard for EU AI Act compliance. Estimated compliance cost reduction: 60-80% vs. ad hoc approaches.

2. AI Safety Beyond Empiricism. Current AI safety is either empirical (red-teaming) or statistical (adversarial testing). CCE adds structural guarantees: mathematical proofs that certain behaviors cannot emerge under specified conditions. This is a qualitatively different safety layer.

3. Interpretability as Legal Evidence. String diagrams aren't just pretty math. They produce visual causal audit trails that can serve as legal evidence in liability proceedings -- a "causal chain certificate" for the courtroom.

4. Cross-Domain Transfer. Functorial mappings preserve causal structure across domains. A causal model of drug interactions can be formally transferred to model financial contagion -- if the structures are isomorphic, the transfer is mathematically guaranteed.

5. Quantum-Ready Architecture. Markov categories encompass both classical and quantum systems. A CCE framework transitions to quantum causal inference with zero architectural changes when quantum hardware matures.
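The isomorphism condition in point 4 can at least be checked mechanically at the graph level. The sketch below is a hypothetical illustration: both DAGs and the node mapping are invented, and the check covers only the arrows, not the functional mechanisms attached to them.

```python
def is_dag_isomorphism(edges_a, edges_b, mapping):
    """True if `mapping` is a bijection on nodes that maps edges_a exactly onto edges_b."""
    nodes_a = {node for edge in edges_a for node in edge}
    nodes_b = {node for edge in edges_b for node in edge}
    images = list(mapping.values())
    is_bijection = (
        set(mapping) == nodes_a
        and set(images) == nodes_b
        and len(set(images)) == len(images)
    )
    if not is_bijection:
        return False
    return {(mapping[u], mapping[v]) for u, v in edges_a} == set(edges_b)


# Hypothetical causal graph for drug interactions.
drug_edges = [("dose", "concentration"), ("concentration", "toxicity"),
              ("co_medication", "concentration")]

# Hypothetical causal graph for financial contagion.
finance_edges = [("exposure", "losses"), ("losses", "default"),
                 ("counterparty_stress", "losses")]

candidate = {
    "dose": "exposure",
    "concentration": "losses",
    "toxicity": "default",
    "co_medication": "counterparty_stress",
}

print(is_dag_isomorphism(drug_edges, finance_edges, candidate))
```

A mapping that passes this check preserves which variables are parents of which, so graph-level criteria such as valid adjustment sets carry over. Whether the numerical mechanisms transfer is a separate, stronger question that this check does not answer.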

---

The Call to Action

Physics advances by unification. Maxwell merged electricity, magnetism, and light into one set of equations. Einstein merged space and time into spacetime. AI has three revolutions -- causality, compositionality, complexity -- waiting for their unification moment.

The pieces are on the table. Lorenz and Tull gave us categorical causal abstraction. Zhang gave us computable emergence. Gavranovic gave us categorical deep learning. Krasnovsky showed the triple convergence is possible.

What's missing is the engineering integration and the will to build it.

The white space is open. The regulatory wind is at your back. And the first team that writes AI's equivalent of Maxwell's equations -- unifying seemingly separate research programs into a single framework -- won't just publish a paper.

They'll define the next decade of AI.

---