The OECD Just Rewrote the Rules for AI Accountability — Here's What Every Business Needs to Know

Published February 2026 | Reading time: ~8 minutes

---

The global AI governance landscape just got a major upgrade.

On January 26, 2026, the OECD officially adopted the Due Diligence Guidance for Responsible AI — a landmark document that finally translates high-level AI principles into concrete, actionable steps for businesses operating anywhere in the AI value chain.

This isn't another policy paper gathering dust. It's a practical operating manual for the entire AI economy.

📎 Read the original OECD document here

---

Why This Document Matters

For years, AI governance has been caught in a frustrating paradox: everyone agreed on the principles (fairness, transparency, accountability, human oversight), but almost no one could agree on what those principles actually require in practice.

Companies were left to interpret vague frameworks on their own. Governments issued guidelines with no enforcement muscle. And the most consequential decisions — who gets hired, who gets a loan, who goes to jail — were increasingly made by systems that nobody was fully accountable for.

The OECD's new Guidance breaks this impasse by doing something deceptively simple: it applies to AI the same responsible business conduct (RBC) framework that already governs multinational enterprises in mining, finance, and agriculture.

This is a masterstroke of regulatory design. Instead of inventing AI governance from scratch, the OECD leveraged 50 years of hard-won international business conduct standards and extended them into the digital economy.

---

What Is "Responsible Business Conduct Due Diligence" — and Why AI Needs It

Responsible Business Conduct (RBC) due diligence is the process by which enterprises identify, prevent, mitigate, and account for adverse impacts on people and the planet arising from their operations and value chains.

Think of it as corporate risk management — but instead of financial risks, you're managing human rights risks, labor risks, and societal risks.

This framework has been battle-tested across industries and is anchored in the UN Guiding Principles on Business and Human Rights, the ILO Tripartite Declaration, and the OECD Guidelines for Multinational Enterprises. It's not theoretical. Thousands of companies follow it today.

The new OECD guidance says: if you're in the AI business, this framework now applies to you too.

---

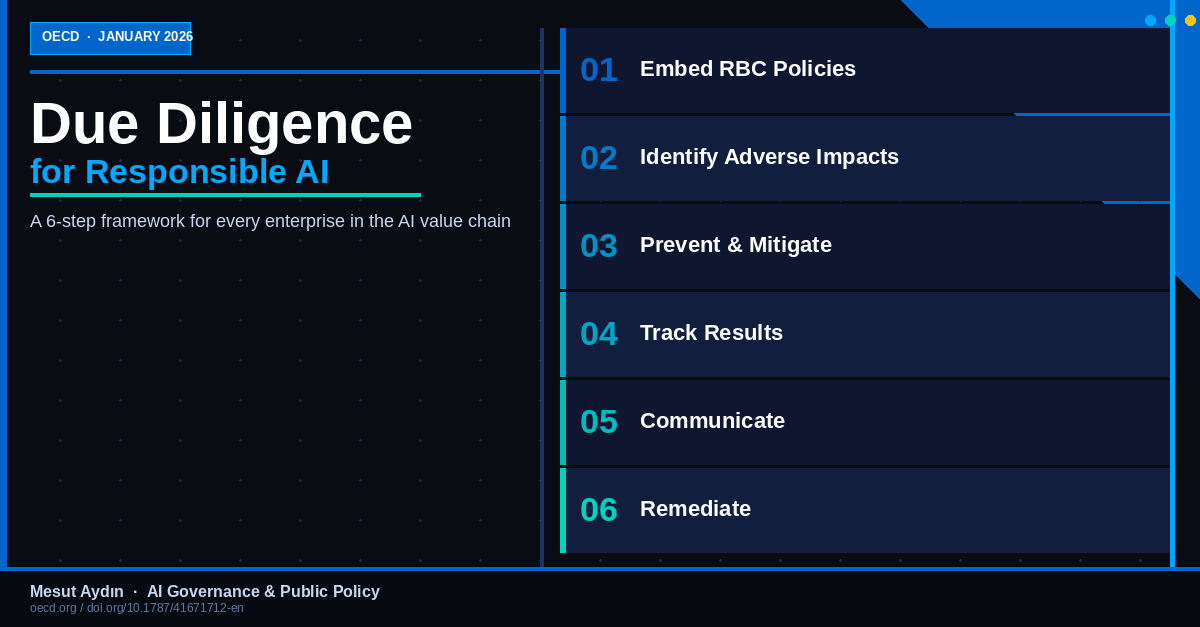

The Six Steps Every AI Enterprise Must Follow

The Guidance organizes due diligence into six interconnected steps. Think of them as a continuous cycle, not a one-time checklist: what you learn in later steps feeds back into the earlier ones.

Step 1: Embed Responsible AI into Your Policies and Management Systems

Before you can manage AI risks, you need to institutionalize the commitment. This means board-level accountability for AI impacts, a written policy covering your entire AI value chain, internal governance structures, and contractual requirements flowing down to suppliers and partners.

The key insight here is scope. Your responsibility doesn't end at the edge of your own company. If your AI system is trained on data scraped by a third party, or runs on compute infrastructure that causes environmental harm, you own part of that impact.

Step 2: Identify and Assess Actual and Potential Adverse Impacts

This is where the serious analytical work happens. Companies must assess who is affected (workers, users, communities, vulnerable populations), what the potential harms are, and how severe and how reversible those harms are. The Guidance provides a useful prioritization matrix: severity × likelihood × reversibility, where harms that are harder to reverse rank higher.

Not all risks require the same response. But willful ignorance — "we didn't know" — is no longer a defensible position.
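
To make the prioritization idea concrete, here is a minimal sketch in Python. It is an illustration, not something taken from the Guidance: the 1-to-5 rating scales, the `Impact` class, the `rank` helper, and the example ratings are all assumptions made up for this article. Reversibility is expressed as an "irreversibility" rating so that a higher number always means a higher priority.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Impact:
    """One potential adverse impact, rated on hypothetical 1-5 scales."""
    name: str
    severity: int         # 1 (minor) to 5 (grave)
    likelihood: int       # 1 (rare) to 5 (almost certain)
    irreversibility: int  # 1 (easily reversed) to 5 (permanent)

    def priority(self) -> int:
        # Reject ratings outside the assumed 1-5 scale.
        for rating in (self.severity, self.likelihood, self.irreversibility):
            if not 1 <= rating <= 5:
                raise ValueError("ratings must be between 1 and 5")
        # Simple multiplicative score: higher means address sooner.
        return self.severity * self.likelihood * self.irreversibility


def rank(impacts):
    """Return impacts sorted from highest to lowest priority score."""
    return sorted(impacts, key=lambda impact: impact.priority(), reverse=True)


# Illustrative ratings only; real ratings come from your own assessment.
impacts = [
    Impact("Biased resume screening", severity=4, likelihood=3, irreversibility=3),
    Impact("Chatbot gives wrong store hours", severity=1, likelihood=4, irreversibility=1),
    Impact("Wrongful loan denial", severity=4, likelihood=2, irreversibility=4),
]

for impact in rank(impacts):
    print(f"{impact.priority():>3}  {impact.name}")
```

With these made-up ratings, the resume-screening impact scores 36, the loan denial 32, and the chatbot error 4. A single number like this is a starting point for discussion, not a substitute for judgment about who is affected.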

Step 3: Cease, Prevent, and Mitigate Adverse Impacts

Once harms are identified, the obligation is clear: cease activities that directly cause harm, prevent potential harms before they materialize, and mitigate what you can't fully prevent.

The Guidance is particularly sharp on leverage. Even if you don't directly control a supplier causing harm, you may have leverage through contracts, purchasing decisions, or public disclosure. Using that leverage isn't optional — it's part of your duty.

Step 4: Track Implementation and Results

Good intentions without measurement are meaningless. The Guidance requires ongoing monitoring across the AI system lifecycle, feedback mechanisms that actually reach affected communities, and periodic reassessment — especially when systems are updated, scaled, or deployed in new contexts.

An AI system that performed well in testing may behave very differently at scale, in different cultural contexts, or when adversarial actors start probing its boundaries.
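
One way to operationalize "reassess when something changes" is a simple trigger check that runs whenever a system's record is updated. The sketch below is hypothetical: the `SystemSnapshot` fields, the doubling threshold for "scaled", and the 365-day review interval are assumptions chosen for illustration, not requirements stated in the Guidance.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class SystemSnapshot:
    """Hypothetical record of an AI system at the time of an assessment."""
    model_version: str
    monthly_users: int
    deployment_contexts: frozenset
    last_assessed: date


def reassessment_reasons(previous, current, today,
                         max_age_days=365, scale_factor=2.0):
    """List the reasons, if any, that a fresh impact assessment is due."""
    reasons = []
    if current.model_version != previous.model_version:
        reasons.append("system updated")
    if current.monthly_users >= previous.monthly_users * scale_factor:
        reasons.append("usage scaled")
    new_contexts = current.deployment_contexts - previous.deployment_contexts
    if new_contexts:
        reasons.append("new contexts: " + ", ".join(sorted(new_contexts)))
    if today - previous.last_assessed > timedelta(days=max_age_days):
        reasons.append("periodic review due")
    return reasons


# Illustrative data only.
before = SystemSnapshot("1.0", 10_000, frozenset({"hiring-us"}), date(2025, 6, 1))
after = SystemSnapshot("1.1", 25_000, frozenset({"hiring-us", "hiring-de"}),
                       date(2025, 6, 1))

print(reassessment_reasons(before, after, today=date(2026, 2, 1)))
```

In this example the check reports three reasons (a new version, usage that more than doubled, and a new deployment context), while the periodic review is not yet due. The thresholds are the part to argue about internally; the point is that the triggers are written down and checked automatically.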

Step 5: Communicate Actions to Address Impacts

Transparency is not PR. The Guidance distinguishes between reporting to affected stakeholders, public disclosure, and proactive communication. Companies that communicate openly about their AI governance — including their failures and corrections — build far more durable trust than those that only publish glossy "responsible AI" reports.

Step 6: Provide for or Cooperate in Remediation

When harm has occurred, make it right. This means providing grievance mechanisms that affected parties can actually access, cooperating with legitimate remediation processes, and going beyond legal minimums when the severity of harm demands it.

The Guidance is explicit: if your AI system caused harm to thousands through discriminatory decisions, writing a check to an NGO is not remediation. The harm must be addressed at the source.

---

Who Is This Guidance For?

One of the Guidance's most important features is its clarity on scope. The OECD identifies three distinct groups within the AI value chain, and all three carry responsibilities:

Group 1 — AI Input Suppliers: Data providers, compute infrastructure companies, cloud platforms, semiconductor manufacturers. Upstream choices on data quality, energy consumption, and labor conditions have profound downstream consequences.

Group 2 — AI Developers and Deployers: Companies building foundation models, fine-tuning them, or deploying them in products and services. This group carries the heaviest due diligence burden.

Group 3 — AI Users: Enterprises using AI-powered tools — from HR software with AI resume screening to hospitals using AI for diagnostic support. You may not have built the system, but deploying it in consequential contexts comes with accountability.

---

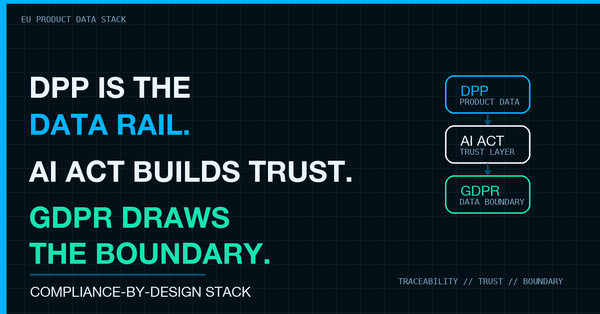

The EU AI Act Connection

The OECD Guidance explicitly references the EU AI Act's risk classification and requirements for high-risk AI systems: conformity assessments, technical documentation, human oversight mechanisms, and registration in the EU database.

This alignment is not coincidental. The OECD and European Commission coordinated closely. For global companies, this is good news: you don't need two separate compliance programs. Companies implementing OECD-compliant due diligence will be substantially prepared for EU AI Act compliance — and vice versa.

---

Why This Is a Strategic Opportunity, Not Just a Compliance Burden

The OECD itself makes this argument explicitly: responsible AI is a competitive advantage.

Companies with serious due diligence will catch problems before they become expensive crises, build genuine stakeholder trust, attract talent who want ethically governed organizations, access capital markets increasingly screened for ESG performance, and reduce regulatory, legal, and reputational risk.

The companies that struggle will be those treating AI governance as a checkbox exercise. When the first major AI-related harm lawsuit lands — and it will — courts and regulators will ask: "Did you have a serious process? Did you follow it? Did you document it?"

The OECD Due Diligence Guidance gives you the architecture for a serious answer.

---

What Should You Do Starting Monday?

- Map your AI exposure — What AI systems is your organization using, developing, or supplying? Who is affected?

- Assign accountability — Is there a named individual or body responsible for AI governance? If not, that's your first task.

- Assess your highest-risk systems — Apply severity × likelihood × reversibility to your top 3–5 most consequential AI applications.

- Review your supplier contracts — Do they include any AI governance requirements?

- Read the Guidance — 100+ pages, but one of the most practically useful AI policy documents produced to date.

📎 OECD Due Diligence Guidance for Responsible AI — Full Document

---

Final Thought

We are at a genuine inflection point in AI governance. The gap between "we believe in responsible AI" and "here is what we actually do" is closing — not because companies suddenly became more virtuous, but because the regulatory and legal infrastructure is catching up.

The question is no longer whether your organization will be held accountable for its AI impacts. The question is whether you'll be ready when that moment comes.

Mesut Aydın is a public policy professional with 21 years of experience in AI governance, trade regulation, and international policy. He has represented Turkey at the OECD in Paris. This article reflects his personal views.